By Michael Wolf

For most of my adult life, doing things around the home meant pushing buttons and turning dials. In fact, for almost everyone who isn’t a teenager or younger, the process of cooking, making coffee or doing laundry meant using mechanical controls.

But that’s beginning to change. Touch screens have become massively popular over the past decade, and more recently, natural language interfaces like voice recognition have captured the imagination of consumers. There’s no doubt physical interfaces are beginning to give way to digital ones.

Consider Alexa. Consumers use Amazon’s new voice assistant technology not only to play music or ask about the weather but also to cook, shop and start the wash cycle on that load of laundry. And as consumers have embraced Alexa and its physical manifestation in the Echo, companies such as Whirlpool and ChefSteps have rushed to create Alexa skills, voice commands that work like an application for a specific device. The Alexa skill universe has doubled in size over the past six months to more than 7,000, a growth rate reminiscent of the iPhone app marketplace a decade ago.

But Alexa is only the beginning. Other companies are exploring voice, motion-sensing and bio-authentication interfaces, as well as plugging their products into smart home and Internet of Things (IoT) platforms for integrated access and control of their products.

This shift toward new interfaces has changed how product designers create products. As new interfaces such as voice and touch become more commonplace, companies find that not only can they significantly differentiate their products through the effective use of user-interface design principles, they can also create entirely new usage and business models.

Trends in the Future of Interfaces

What does the future world of interfaces look like? Below are a few trends we can expect to see in user-interface design over the next decade.

Interfaces will become less visible over time. While it’s safe to say user interfaces won’t completely disappear, the trajectory is toward interfaces being less intrusive and less visible. The result will be more voice and smartphone-centric interfaces for connected devices, but also more products that anticipate your needs or act on signals from new technology such as machine vision.

New interfaces enable new types of capabilities and experiences. As entirely new ways to interface with technology are integrated into products, many existing application categories are reinvented while new ones come to life. Voice, for example, can serve as both an authentication and a command interface for IoT. Interfaces also make our surroundings more accessible; the elderly and people with disabilities are given ‘virtual limbs’ in the form of voice assistants to better control the world around them.

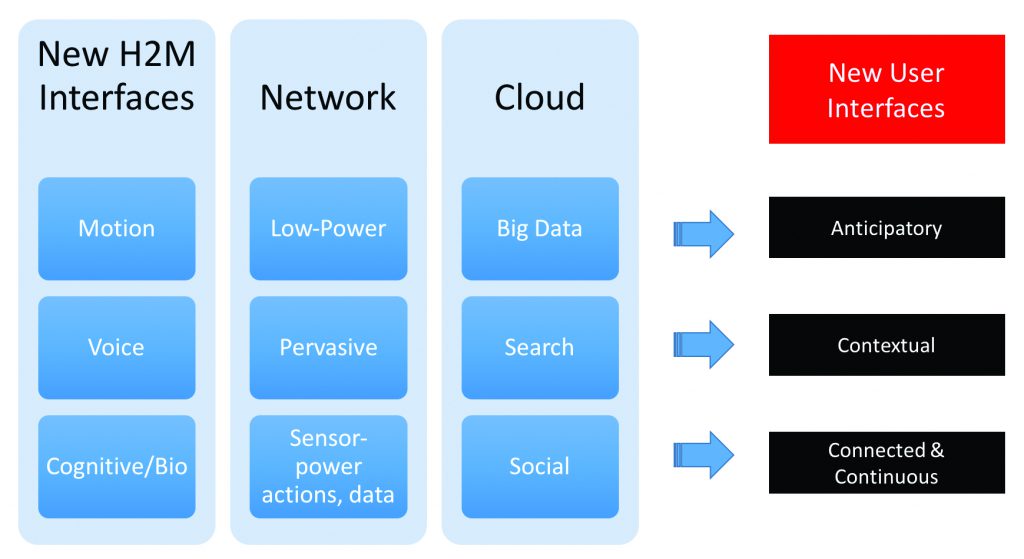

Interfaces lead to new ways to experience technology. A confluence of new technologies such as big data, cloud, anticipatory computing and social media is combining with new interfaces to create entirely new interaction experiences that are continuous, contextual and anticipatory.

The Changing Experience of Human-to-Machine Interfaces

So what does all this mean for us, the consumer? Are we headed toward a world where everything is controlled by our voice, where buttons and dials become a thing of the past?

The good news is no. The reality is physical controls are still extremely useful and important. They’re intuitive, people understand them and they often are more reliable.

But as we embrace interfaces that are natural extensions of ourselves, whether that means using our voice, simple motions or bio-authentication as a way to interact with the world around us, what does that mean? Simple: It makes product experiences more anticipatory, contextual and continuous.

Anticipatory. Technology experiences are becoming more anticipatory. This means they increasingly predict a user’s needs and serve them without requiring a conscious action from the user. Google Assistant is an example of a product that was developed specifically to create anticipatory experiences, but nearly all technologies will become more anticipatory in the future by better understanding the user through a number of inputs (social, sensory, etc.).

Contextual. New interfaces can provide information about a person’s current situation using big data from social inputs, past behavior and a sensory understanding of the person’s surroundings, which creates a more fine-tuned experience than the generalized, one-size-fits-all technology experiences of the past. One example of a contextual experience would be a Netflix-like recommendation algorithm that considers a person’s mood, their activities up to that point in the day (or what they plan on doing later), who is in the room with them and what may have happened in the world that day.

Continuous. Technology experiences often are isolated from one product to the other. This means as the user passes from one life zone to another (car to home to public space to work), the new experience isn’t reflective of the previous one.

But that will change. Imagine listening to a game on the radio, then walking into the house as the TV turns on the same game at the point where you left off. In the future, technology-driven experiences will be more continuous as a result of pervasive, connected networks and cloud computing.

Long Term, Products Will Become More Intuitive

Today we are in an age of adjustment and learning. However, as consumers become comfortable interacting with their products through platforms such as Alexa, products powered by new interfaces will simply become more intuitive. Generational differences will persist for some (for example, some older adults have difficulty grasping the concept of touch screens on smartphones or tablets), but these will dissipate as consumers adjust and new interface technologies become more refined and pervasive.

Ten years from now, no one will think twice about products that not only understand us better but also do what we want without our having to lift a finger.